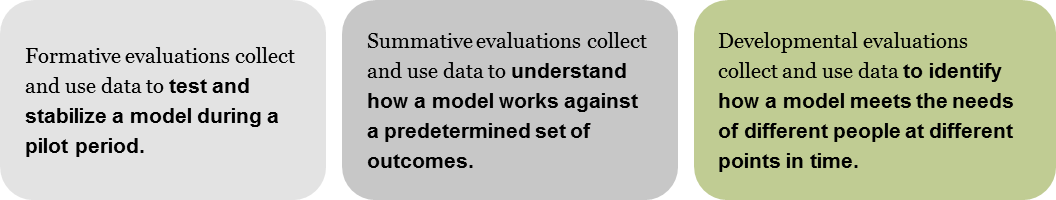

Developmental Evaluation: What It Is & When to Use It

When complex, external factors—ranging from policies and economic shifts to technological advances and environmental changes—come crashing through a program’s implementation, data from a traditional, summative evaluation can become obsolete and even counter-productive. Enter developmental evaluations, which deliver feedback on how an intervention fits within the broader system it’s trying to affect and, in turn, reveal pathways for change that more accurately address the roots of a community’s complex needs.

Developmental evaluations are particularly useful in complex environments where:

- Actions and their results are non-linear and have multiple, compounding effects

- Patterns emerge, but usually without one definitive reason

- Dynamic interactions among actors have varying degrees of intensity and regularity

- Actors adapt as they respond to and interact with others

- Processes are not certain to produce particular outcomes

- Interdependent actors co-evolve alongside one another

Complex environments require implementers to adapt their strategies rapidly and continually in response to these dynamic conditions. So, rather than focus on achieving a specific set of outcomes, developmental evaluations deliver information and learning that further implementers’ understanding of their role in the complex environment and of the way their intervention affects that environment. Implementers may discover, thanks to data from a developmental evaluation, how their work extends beyond what the intervention can directly control; the intervention then relies on the ripple-effect mechanics of the system to carry its impact as far as possible.

To this end, data collection and analysis for developmental evaluations prioritize context over outcomes at first. This doesn’t mean developmental evaluations can’t measure outcomes. Rather, developmental evaluations are very much alive and growing with the initiatives they assess; as the initiative evolves, so too does the evaluation, such that a developmental evaluation can eventually begin to inform progress toward outcomes, once enough is known about context and external variables.

Research methods in developmental evaluations can stray from conventional data collection. For example, to ensure that a survey provides useful data that accurately describes systemic conditions, stakeholders may weigh in on the wording of a question they’ll ultimately be answering. Or, rather than conducting a focus group with pre-formulated questions, initiative leaders themselves might gather stories and anecdotes from different groups of stakeholders, and then create a systems map based on how those stories relate and connect to one another. As evaluators, we add rigor to this work of learning by guiding these techniques and methods to elicit useful and relevant data for timely decision-making. We also help implementers process each bit of feedback and incorporate it into their strategy, even helping them share evaluation findings with the community that informed those findings in the first place.

More than any other evaluation approach, developmental evaluation requires a healthy dose of humility: an admission that we can’t possibly predict every outcome of our clients’ work, and that there is no silver bullet for solving system-wide imbalances and inequities. The emergent nature of a new initiative in a complex environment also means that a big part of developmental evaluation is a readiness for surprise, i.e., expecting the unexpected. For those involved in making decisions about an initiative and implementing it, this presents the challenge of balancing an overarching vision with the reality of what is happening before them, often with unintended outcomes and byproducts. Paired with a spirit of curiosity, the learning from developmental evaluation can reveal insights that might never have been imagined and that lay a path toward positive change.

Want to delve deeper into developmental evaluation? Michael Quinn Patton’s book, Developmental Evaluation: Applying Complexity Concepts to Enhance Innovation and Use, is a great place to start (it informed this blog post and is a trusty companion to us during developmental evaluations), along with resources at BetterEvaluation. And as always, feel free to leave comments or drop us a line.